by Ian Howell

Published April 20, 2026

It’s unstoppable: Artificial Intelligence is becoming central to our digital experiences. In early music, large language models can help amplify your expertise.

Conventional wisdom in the tech industry suggests that you will not lose your job to A.I.; you will lose your job to somebody who knows how to use it well. While music education and research communities have historically wrestled with questions related to the integration of emerging technologies — word processors, MIDI, low-latency telemusic, the ethics of digital editing — artificial intelligence presents a wholly unique challenge.

Meaningful parallels strain the imagination. In the performance world, the analogy is not whether editing together multiple takes truly represents a “performance.” Rather, a violinist could use A.I. to generate their performance in real time during a virtual lesson. Technically, they prompted the creation of the sound, but did they create the sound? In the research world, the analogy is not moving from a library’s paper card catalog into discovery-oriented database search tools. It’s that A.I. can literally do the literature review for you. Or at least create the impression that it has.

As hard as it was to grapple with wonky technology when the pandemic pushed us into online teaching, A.I. presents an overwhelmingly more consequential challenge. To successfully respond, we must engage the technology honestly. This means recognizing what the human would need to know and bring to the process to maximize A.I.’s utility.

Conflicting Expectations

Those who work within music education and research face two seemingly contradictory visions of the future. Unlike the commercial publishing and recording industries, the academic publishing world has largely accepted A.I.’s utility for writers. This includes aspects of research as well as outlining and revising.

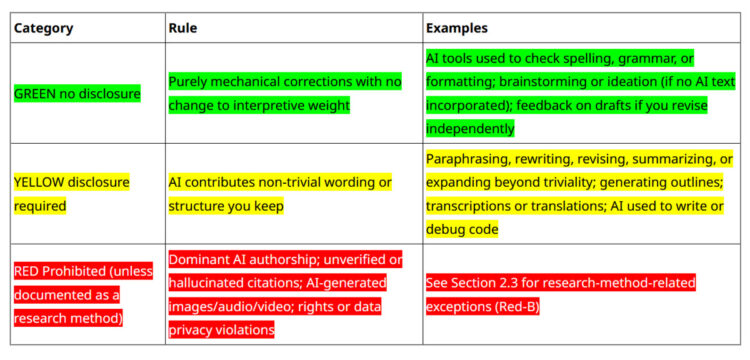

In academia, most major journals or publishing houses have an A.I. policy that permits its use with some restrictions on scope and the need for disclosure. Indeed, the Journal of the American Musicological Society, 19th-Century Music, Early Music, Music & Letters, and the Journal of Musicology, through their publishers at the University of California Press and Oxford University Press, all permit the use of A.I. in a variety of disclosed ways. These policies all require that the human expert remain in control of authorship, but they do not police the use of A.I.

At the same time, educators face an onslaught of unusable A.I.-generated “student” writing, reducing them to technology policemen rather than engaged teachers. I have colleagues working at R1 institutions who now receive poorly written personal statements from applicants who clearly did not edit their own A.I.-generated output.

How can these things be simultaneously true? A.I., in typical student use, tends to generate failing (or at minimum unpublishable) papers and essays; yet the publishing industry that these students may enter either embraces or allows some A.I. use. These conditions coexist because A.I. tools amplify and accelerate expertise, but fundamentally they are incapable of producing it. This means they are simultaneously useful to professionals with a command of their domain and actively harmful to those seeking shortcuts.

The worst outcomes arise when one treats A.I. as a trusted source of truth. On a free-tier account without custom settings or prompts, most models are likely to give you incorrect data, circular logic, and stale rhetoric. Two years ago, this was the stereotypical reason to reject it. You were going to spend as much time correcting its hallucinations as you would have spent doing the work yourself. And in practical terms, memory limitations made it impossible to work on large or complex projects. However, any opinions formed prior to the release of the current frontier models need to be reevaluated.

In the past two years, memory access has expanded significantly, allowing models to ingest huge amounts of information and hold it in context for a project. You can now teach a model to offer edits in your voice by having it analyze your previous manuscripts. The models themselves are also more powerful and reliable, and you may choose from a range of different options. Some are best for rapid exploration of information; others are best for reasoning or “thinking” through complex questions. Additional modules may be available specifically for deep research or so-called agentic behavior (having “agency”), meaning that the A.I. tool will work independently, with minimal human oversight, on a literature review or project. This can be helpful as long as the task has definable outcomes.

Getting Started with Modern Models

The best output from a modern A.I. tool occurs when you already have subject matter expertise, and you use the tool to help your process rather than to generate the final product. Work with it iteratively as you would a human editor or trusted colleague. That is the reasonable razor: Would I ask a human for this feedback and still consider the work mine? The ethical debate over whether you pass off A.I. writing as your own is moot — these tools simply cannot do that task well without your existing expertise to amplify. In many ways, they reflect the quality of work you put into them.

If you would like some help getting started with A.I., here are some practical approaches to explore. First, use the most advanced model available and initially enable any “thinking” mode option. If those features are only available on a paid tier, I suggest you subscribe for a month. Then, ask it to write a prompt that will carry out a literature review on a subject area you know well. Tell it to clarify anything it needs to know to produce a high-quality, comprehensive review; for it to create its own confidence index to self-report the accuracy of every statement; for it to provide links and references for all entries and claims; and for it to tell you which model and modules to enable. The quality of the iterative processes and the breadth of the review it returns may surprise you. Or ask it to assume a certain role, for example, a rancorous peer reviewer or picky copy editor. Whenever you are unsure exactly what to ask it, ask the tool itself for guidance. Check everything for accuracy, and challenge its output, but you can forestall many stereotypical A.I. hallucinations with proper prompting.
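For readers comfortable with a little scripting, the request described above can be kept as a reusable template. What follows is only a minimal sketch in Python, not a prescribed method: the function name `build_meta_prompt` and the sample subject are hypothetical, and the wording of each requirement simply paraphrases the steps in the preceding paragraph. The script only assembles text. You would still paste the result into whichever A.I. tool you use.

```python
def build_meta_prompt(subject: str) -> str:
    """Assemble a request asking the model to draft its own literature-review prompt.

    Hypothetical helper for illustration; the requirements paraphrase the article.
    """
    requirements = [
        "Ask me clarifying questions before you begin, covering anything you "
        "need to know to produce a high-quality, comprehensive review.",
        "Attach a self-reported confidence rating to every statement.",
        "Provide links and references for all entries and claims.",
        "Tell me which model and modules I should enable to run the prompt.",
    ]
    # Number the requirements so they are easy to refer back to in follow-ups.
    numbered = "\n".join(f"{i}. {r}" for i, r in enumerate(requirements, start=1))
    return (
        f"Write a prompt that will carry out a literature review on {subject}.\n"
        "The prompt you write must meet these requirements:\n"
        f"{numbered}"
    )


if __name__ == "__main__":
    # The subject below is a placeholder; substitute an area you know well.
    print(build_meta_prompt("ornamentation practice in Baroque oratorio"))
```

Keeping the requirements in a list makes it easy to add, drop, or reword one between iterations and compare what the tool returns each time.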

Data Protection and Personalization

Before using A.I. for research or academic work, lock down the provider’s access to your data. There is typically an option to opt out of training, which removes your information from the company’s ongoing model-training processes. For particularly sensitive data, you may also use a temporary or incognito chat mode. This mode stores no data long term; even you will be unable to revisit the thread. Data privacy is especially important when working on an unpublished manuscript, if you have collected human subject data, or if you are working with an institutional review board. Note that even if you protect your data, clicking on any sort of feedback tool (for example, a thumbs-up button) may expose your information to quality-control teams.

Additionally, set ground rules with account-level personalization options. Most A.I. tools default to the sort of cheery, optimistic tone that makes me suspicious. This can be changed by telling the bot exactly who you are, what you find interesting, how you like to interact, how it should behave, and what kinds of responses you want. For example, you can tell it to never rewrite your prose and instead offer feedback. You can tell it to never suggest something it cannot produce evidence for. You can have it question and explicitly support every suggestion it makes, err on the side of caution over breadth, or ask as many questions as are required to reach a high level of certainty that it is correctly executing the task. (Last year, EMA published an article by a historical performance (HP) musician who found rewards and risks using A.I. to decipher old manuscripts.)

If you remain skeptical that A.I. can produce quality academic work, you are right; on its own, it cannot. But recognize that the same tools that produce failing student papers can significantly amplify professional expertise. The question is not whether to engage with A.I., whether you need A.I., or whether A.I. is independently capable of producing high-quality content. The question is whether you know enough about both the subject matter and how to iteratively work with an A.I. tool to evaluate its value to you. Remember, you will likely not lose your job to A.I. At least not A.I. as it currently exists. However, you — or your under-educated students — may ultimately be outpaced by someone whose expertise A.I. can amplify.

Ian Howell has sung around the globe as a soloist and member of the Grammy-winning ensemble Chanticleer. He has taught applied voice, vocal pedagogy, and Baroque oratorio at the New England Conservatory of Music and now lives and teaches in Ann Arbor, Michigan. His latest book is Advice for Young Musicians (Embodied Music Lab Press). For an EMA Canto column, he discussed whether in-person musical connections are always better than online ones.